Machine Learning

A couple of years ago, a newly hired developer at eFlex Systems showed me a new Google tool called Teachable Machine. Given multiple snapshots of each object, the system could easily distinguish between, say, an apple and a banana, or between someone with glasses on and off. It was pretty sweet and easy to use, and it had potential.

I encouraged the new dev to take it upstairs and show the bosses. He did, and he got some time allocated for preliminary research and development. The dev eventually lost interest, though, and the project fell by the wayside. Early this year, however, I picked up the mantle, tested the tool, and mocked up the results so the idea could be developed further.

One possible use within our software is error-proofing. Are all the parts in a kit present? Was the right part added? Was it aligned properly? This Google resource easily handles basic distinctions like these, and my own testing showed it can be very precise with very little effort.

Teachable Machine lets you take multiple snapshots of an item in different positions and from different perspectives; the more shots, the better. It also lets you assign a different response to each class: an image, an audio file, or a text-to-speech message. That gives this free resource a lot of capability.

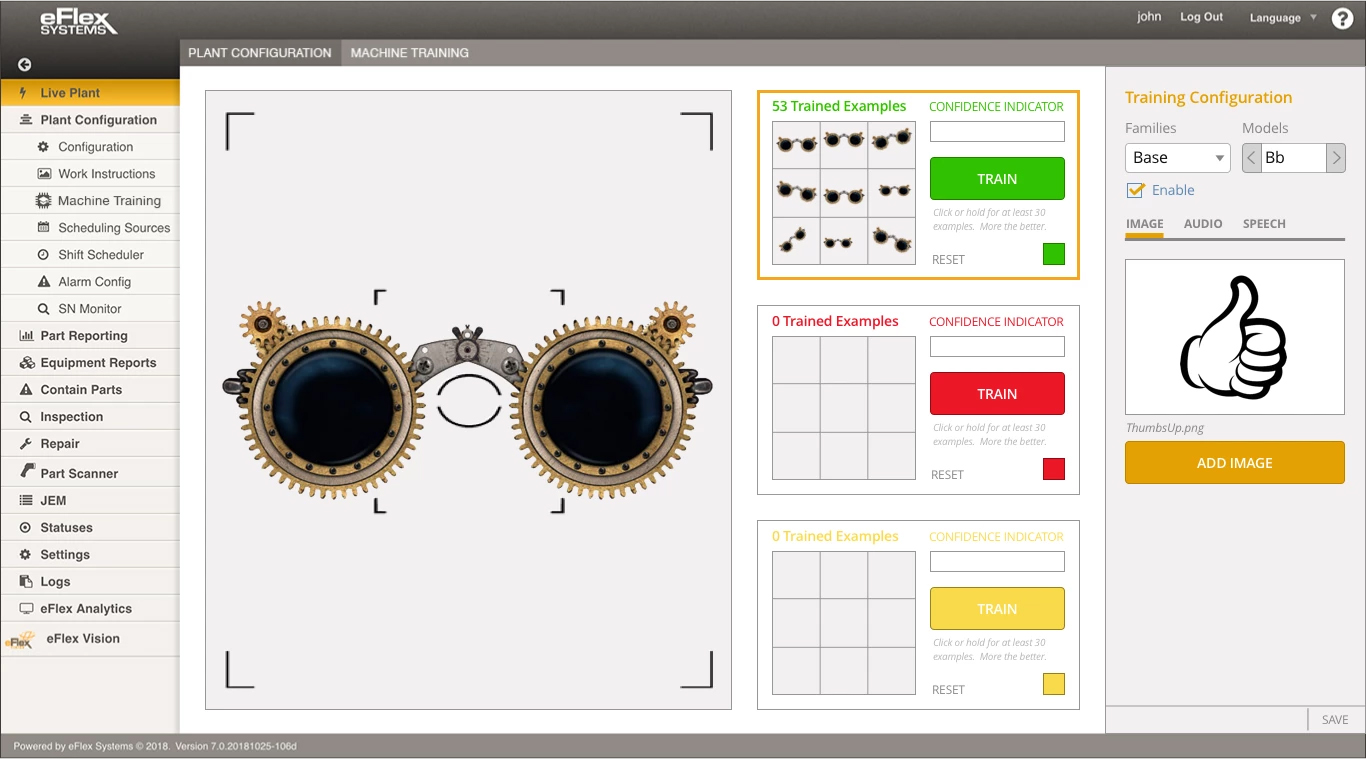

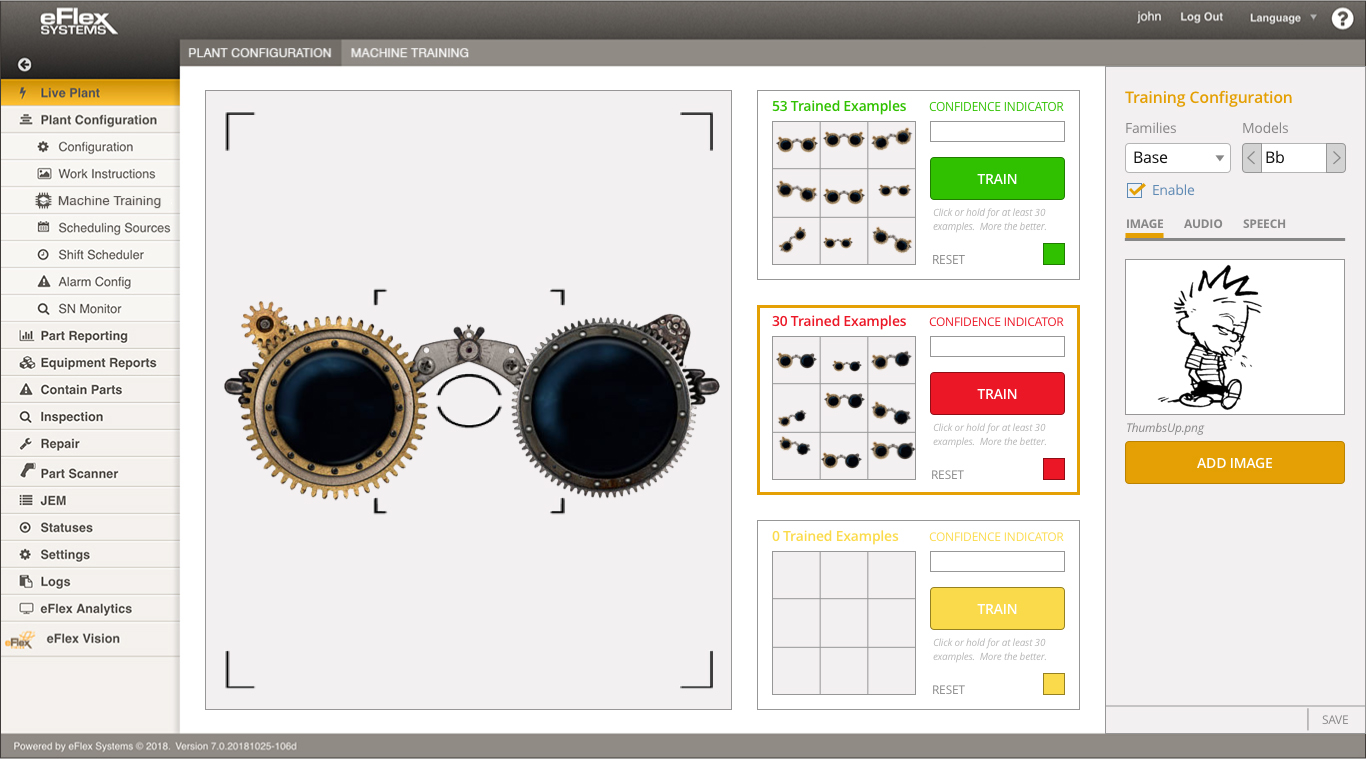

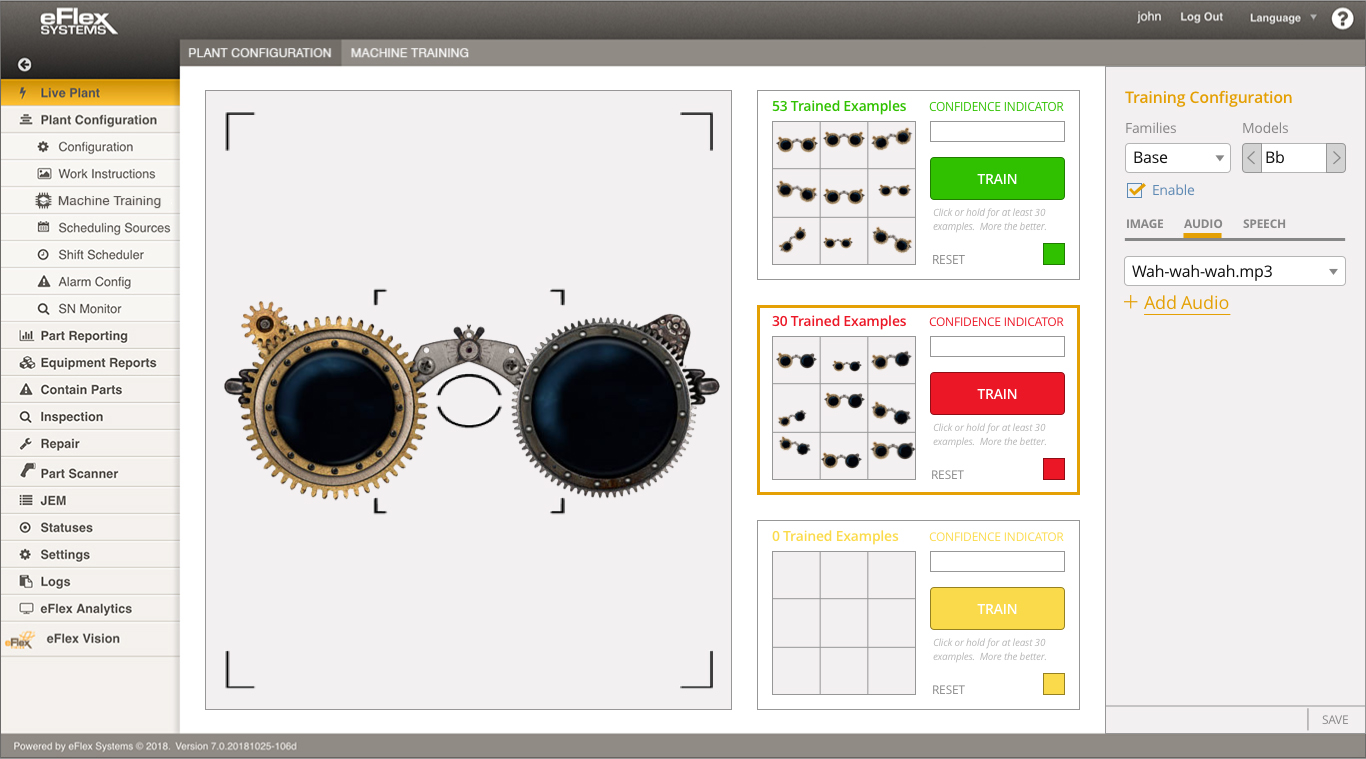

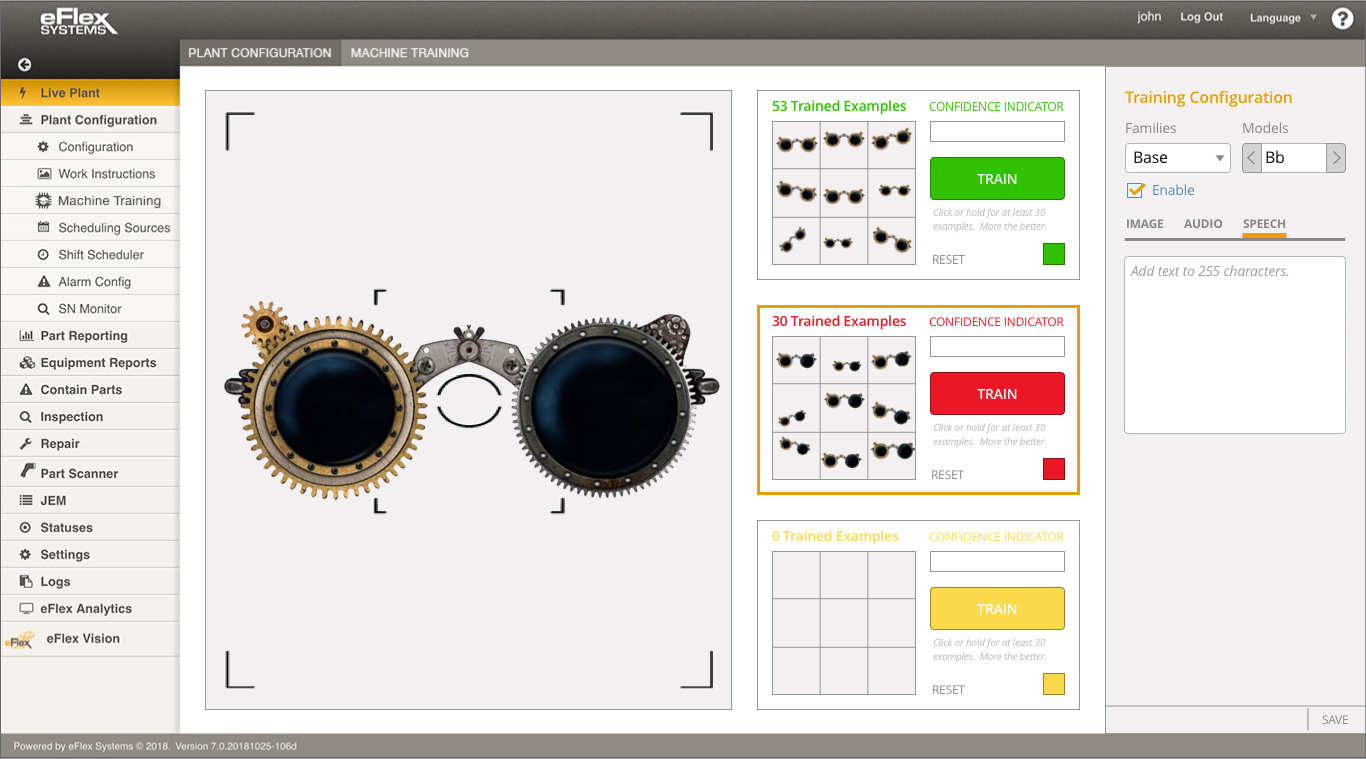

This right-out-of-the-box example teaches the model to identify a correct part and an incorrect part. Recognizing either part can trigger any of three outputs: an image indicating correct or incorrect, an audio file that plays, and/or a text-to-speech message.
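In the mockup, Teachable Machine itself does the recognizing and plays the outputs. To picture how the same behavior might sit inside our own software, here is a minimal Python sketch. It assumes the trained model has been exported and returns one confidence score per class, in the same order as a list of class labels. The `Reaction` type, the `pick_reaction` function, the file names, and the 0.8 confidence threshold are all hypothetical, not part of Teachable Machine.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Reaction:
    """Hypothetical bundle of the outputs configured for one class."""
    image: Optional[str] = None   # e.g. path to a "correct" or "incorrect" graphic
    audio: Optional[str] = None   # e.g. path to an uploaded sound file
    speech: Optional[str] = None  # text to pass to a text-to-speech engine


def pick_reaction(
    labels: Sequence[str],
    probs: Sequence[float],
    reactions: dict[str, Reaction],
    threshold: float = 0.8,  # hypothetical minimum confidence to act on
) -> tuple[Optional[str], Optional[Reaction]]:
    """Return the top-scoring label and its configured reaction.

    Returns (None, None) when the best score is below the threshold, so an
    uncertain frame triggers nothing rather than a wrong pass/fail call.
    """
    if len(labels) != len(probs) or not labels:
        raise ValueError("labels and probs must be non-empty and equal length")
    best = max(range(len(probs)), key=lambda i: probs[i])
    if probs[best] < threshold:
        return None, None
    label = labels[best]
    return label, reactions.get(label)


# Example configuration mirroring the two classes in the mockup.
reactions = {
    "Correct part": Reaction(image="ok.png", audio="chime.wav", speech="Correct part"),
    "Incorrect part": Reaction(image="stop.png", speech="Wrong part. Please check."),
}

label, reaction = pick_reaction(["Correct part", "Incorrect part"], [0.93, 0.07], reactions)
print(label, reaction)
```

The threshold is a design choice: on an assembly line, saying nothing about a blurry or half-visible part is usually safer than confidently announcing the wrong result.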

This mockup shows an interface of Google's Teachable Machine within the eFlex environment.

Correct part. Take dozens of shots at different angles. Set image, audio, and speech reactions.

Incorrect part. Dozens of shots at varied angles. Image set.

Audio uploaded and selected.

Text-to-speech output.

Text entered.

Theoretically, any number of classes could be added, making for a very inexpensive identification, counting, or tracking system, or, as shown here, an error-proofing system.
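As an illustration of the counting and kit-checking idea (not something built in the mockup), here is a minimal Python sketch that compares recognized labels against an expected kit list. It assumes the operator presents parts one at a time, because an image classifier like this one reports a single label per frame rather than locating and counting several objects in one shot. The `check_kit` function and the kit contents are hypothetical.

```python
from collections import Counter
from typing import Iterable, Mapping


def check_kit(scanned_labels: Iterable[str], expected: Mapping[str, int]):
    """Compare classifier labels from individual scans against a kit list.

    Assumes one scan per part, since an image classifier reports a single
    label per frame. Returns (missing, extra) as Counters; both empty means
    the kit passes.
    """
    seen = Counter(scanned_labels)
    need = Counter(expected)
    missing = need - seen  # Counter subtraction keeps only positive counts
    extra = seen - need
    return missing, extra


# Hypothetical kit: labels would be whatever class names were trained.
kit = {"bracket": 2, "bolt": 4, "gasket": 1}
scans = ["bracket", "bolt", "bolt", "bolt", "gasket", "washer"]

missing, extra = check_kit(scans, kit)
passed = not missing and not extra
print("missing:", dict(missing), "extra:", dict(extra), "passed:", passed)
```

Here one bracket and one bolt are reported missing and the washer is flagged as an extra part, so the kit fails.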